Teleport Driven Ansible Dynamic Inventory

While there are a million ways to build Ansible inventory, what if we could build inventory using open-source access systems and not have to maintain multiple sources of truth?

Running a dynamic platform of servers, nodes, and applications is hard, especially when the environments are constantly changing. While there are a million ways to build inventory, what if we could build inventory using open-source access systems and not have to maintain multiple sources of truth?

In this post, I'll explain how I use Teleport as a dynamic inventory provider with Ansible.

TL;DR

- Run/Deploy Teleport

- Download Teleport Ansible inventory script

- Authenticate to Teleport cluster

- Create SSH config for Teleport

- Profit!

Read the documentation found within the inventory script for quick-start instructions.

Read this blog post for an overly wordy rundown of how this all works together.

The Basics

Teleport states it is "a certificate authority and identity-aware, multi-protocol access proxy..." which is a lot of highfalutin words.

Teleport controls access, and we can use its access capabilities to run commands like ssh on remote systems. From the user's point of view, very little needs to be done to make this work with Ansible.

Ansible uses SSH > SSH uses Teleport > Teleport provides access

To make this unholy marriage work like Meghan and Harry, users set an appropriate SSH configuration for their Ansible environment, define their required Ansible configuration, authenticate, and run Ansible normally. The only new step in what I consider a very common Ansible workflow is the authentication requirement.

The benefits

A typical workflow for administrators is to create a static inventory file that maps to some set of resources. As you can imagine, static inventory files will bit rot, especially as they grow. When the rot has grown sufficiently, it becomes an operator's nightmare, and we all want to avoid nightmares.

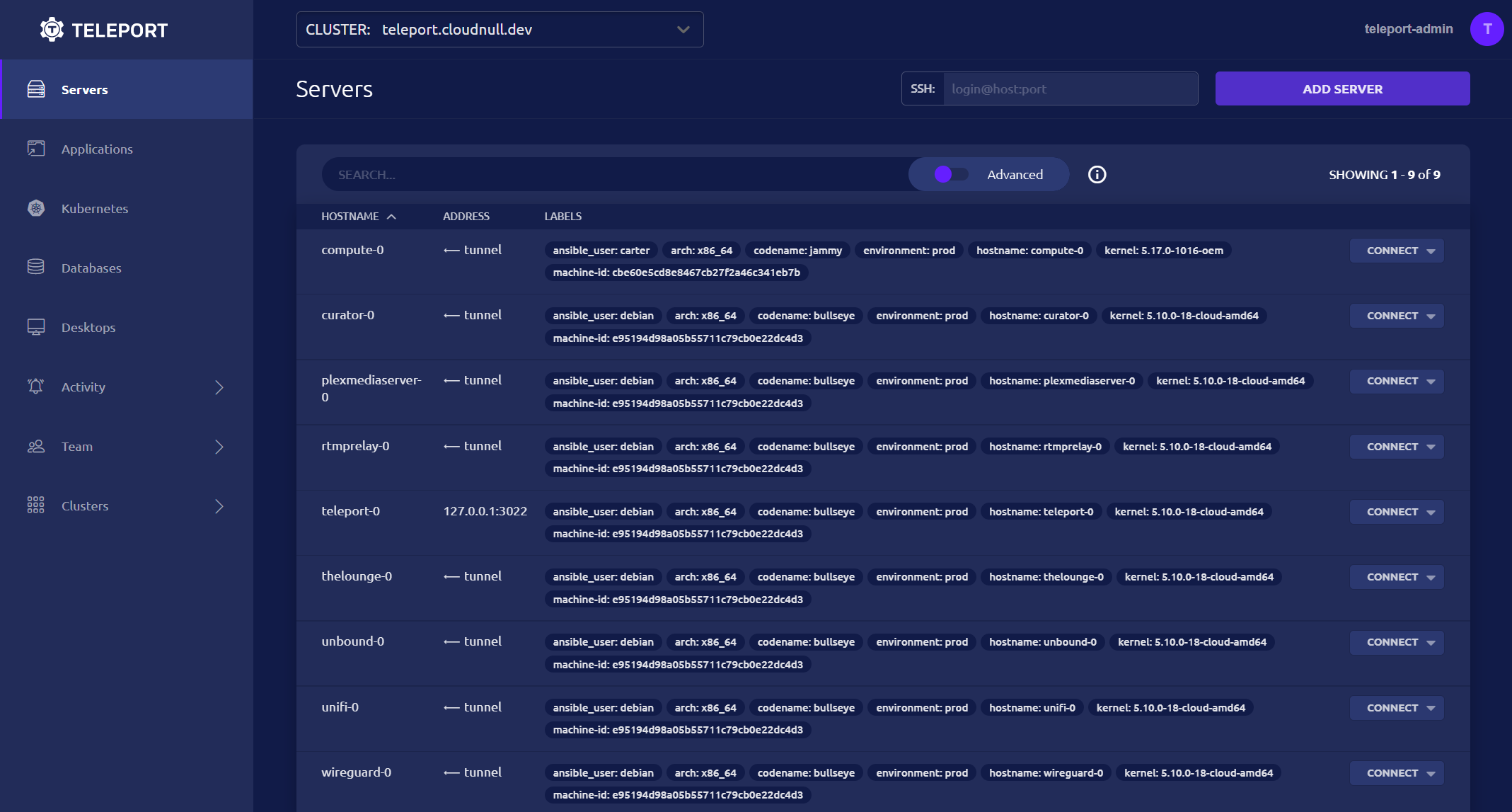

With Teleport, operators and administrators simply add nodes to their Teleport deployment. Labels are used to customize the nodes, and the Teleport server takes care of transport and authentication. When operators need to run Ansible tasks across their environment, teleport-ansible provides a dynamic inventory source for Ansible that allows for native interactions.

Let's get started!

Overview

The setup for this inventory is effortless and follows the basic flow found in the normal Teleport documentation. This post assumes Teleport is up and running and won't get into the deployment details (that's a post for another day). The upstream Teleport documentation is good; you should read that if you're curious about getting started with Teleport.

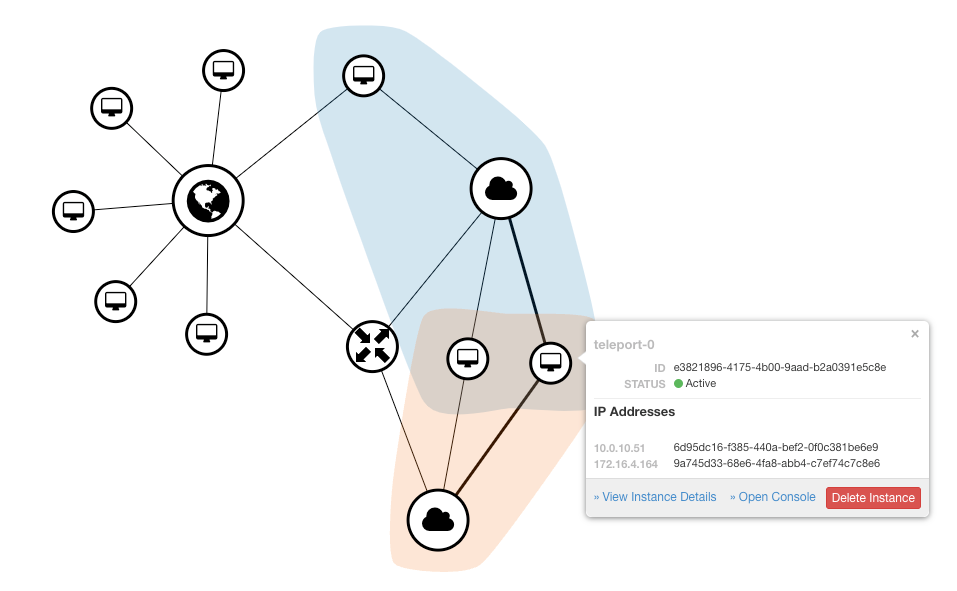

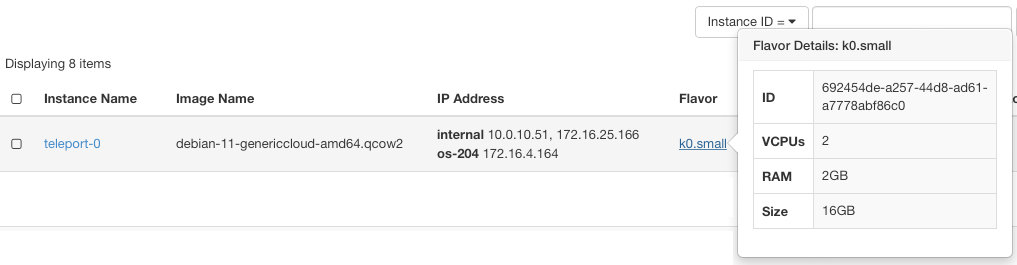

The above image is an overview of my current Teleport environment. I'm using Teleport for access to the nodes within my environment. For clarity, my home lab serves many roles, from WiFi, Plex, and DNS to engineering and development nodes which I require for work. Because my home lab serves so many roles, I've found Teleport incredibly useful. I can label nodes and use those labels to control access. The system is easy to manage and doesn't require a lot of managerial overhead. The SSO integration Teleport offers is lovely, allowing me to connect my upstream GitHub organization to my self-hosted Teleport server.

If you're thinking: "SSO on a home lab?! That's overkill..." You're right. Also my name is Kevin, nice to meet you.

The home lab network topology is quite simple. Teleport bridges my routers and can access my L2 and L3 networks.

This diagram exemplifies what is running and how I access machines through Teleport. While the nodes may host services that are accessible within my home network, there's no active SSH to these servers.

Getting the Inventory tooling

When it comes to getting the inventory tool / script, you have options. You can install teleport-ansible or download the inventory script directly. The beauty of a simple solution is the ability to choose your own adventure.

- Install the teleport-ansible package if you're someone who likes versioning, the ability to deploy via a package manager, and wants to contribute to an open-source project which benefits the community at large.

- Download the script directly if you're dubious of packages and love the thrill of managing files.

GitHub Repository

PyPI Package

Downloading The Inventory Script

curl https://raw.githubusercontent.com/cloudnull/teleport-ansible/master/teleport-inventory.py -o teleport-inventory.py

Installing the above package or following the download instructions will get you everything needed to integrate Ansible with Teleport.

teleport-ansible will work on multiple clusters running both enterprise and open-source Teleport.

Authentication

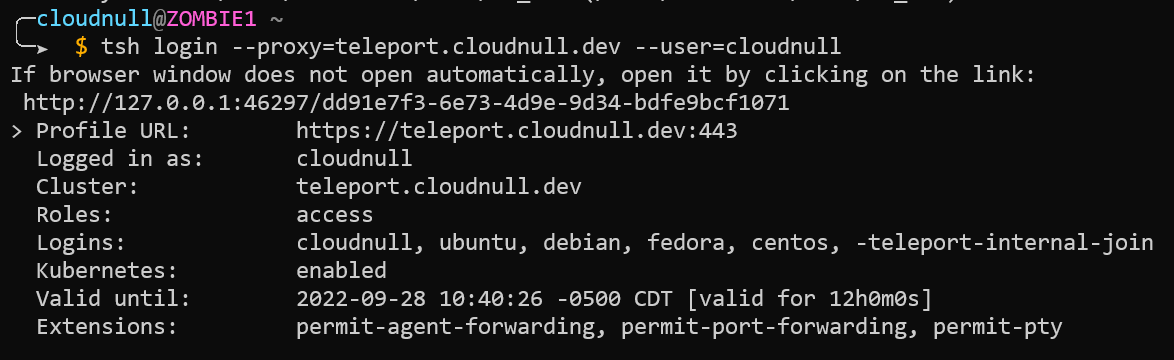

To work with Teleport via SSH, Ansible, or anything else, the user must first log in to the cluster. Authentication is handled with the tsh command, which is part of the Teleport toolchain. As mentioned, this post assumes Teleport is running and that you have Teleport's toolchain installed on your systems. Check out the Teleport docs for more on the tsh command line utility.

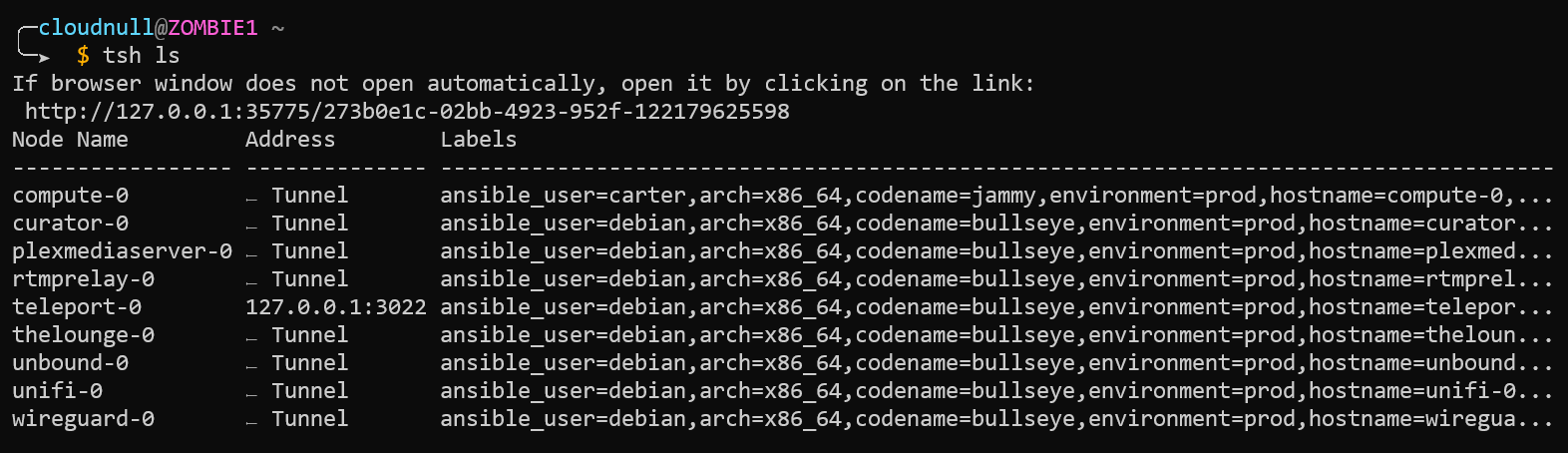

tsh login --proxy=teleport.cloudnull.dev --user=cloudnull. The login command will change based on your Teleport environment. Additionally, it is possible to log in to multiple Teleport clusters simultaneously.

Once you're authenticated into the Teleport environment, you can run tsh commands normally. For example, we can list our servers, providing output similar to what's seen in the web UI.

tsh CLI list servers.

Validating the Dynamic Inventory

Now that we're logged into the cluster, we can run teleport-ansible to validate functionality.

If you installed the teleport-ansible package

teleport-ansible

If you downloaded the script

python3 teleport-inventory.py

The tool will execute and return JSON output.

The inventory JSON output is formatted such that it is interpretable by Ansible. To run Ansible commands with teleport-ansible, simply include teleport-ansible or the file entry-point in the Ansible commands.
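If you haven't looked at script inventory output before, the sketch below shows the general JSON shape Ansible's script inventory plugin accepts and pulls the host names back out of it. The sample data (host names, the `env_lab` group, the host variables) is made up for illustration and is not real teleport-ansible output; `hosts_from_inventory` is a hypothetical helper, not part of the tool.

```python
import json

# Hypothetical sample in the shape Ansible's script inventory plugin accepts.
# Host names, groups, and variables are invented; real output will differ.
SAMPLE = """
{
  "_meta": {"hostvars": {"teleport-0": {"ansible_user": "debian"}, "plex-0": {}}},
  "all": {"children": ["env_lab", "ungrouped"]},
  "env_lab": {"hosts": ["teleport-0", "plex-0"]}
}
"""


def hosts_from_inventory(inventory: dict) -> list[str]:
    """Return the sorted set of host names found in a script-inventory dict."""
    # Every host with variables is listed under _meta.hostvars.
    hosts = set(inventory.get("_meta", {}).get("hostvars", {}))
    for name, group in inventory.items():
        if name == "_meta":
            continue
        # A group is either a dict with a "hosts" list or a bare list of hosts.
        members = group.get("hosts", []) if isinstance(group, dict) else group
        hosts.update(members)
    return sorted(hosts)


if __name__ == "__main__":
    print(hosts_from_inventory(json.loads(SAMPLE)))
```

To try it against a real environment, you could pipe the output of the inventory tool into `json.loads` instead of using `SAMPLE`.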

teleport-ansible can be included on the CLI, in an ansible.cfg file, or via an Ansible environment variable.

SSH Setup

Ansible works via SSH by default. To make SSH work through Teleport, we need to set up a simple configuration file that will do everything needed to proxy commands.

export TELEPORT_DOMAIN=teleport.example.com

export TELEPORT_USER=cloudnull

cat > ~/.ssh/teleport.cfg <<EOF

Host * !${TELEPORT_DOMAIN}

UserKnownHostsFile "${HOME}/.tsh/known_hosts"

IdentityFile "${HOME}/.tsh/keys/${TELEPORT_DOMAIN}/${TELEPORT_USER}"

CertificateFile "${HOME}/.tsh/keys/${TELEPORT_DOMAIN}/${TELEPORT_USER}-ssh/${TELEPORT_DOMAIN}-cert.pub"

PubkeyAcceptedKeyTypes +ssh-rsa-cert-v01@openssh.com

Port 3022

CheckHostIP no

ProxyCommand "$(which tsh)" proxy ssh --cluster=${TELEPORT_DOMAIN} --proxy=${TELEPORT_DOMAIN} %r@%h:%p

EOF

The above snippet will generate a new SSH configuration file named teleport.cfg. For the sake of simplicity, I've created a couple of environment variables in this example, which can be used to set the domain name of the Teleport cluster and the user you wish to use when authenticating to Teleport.

Once the SSH configuration is in place, you can test access to the environment using this SSH config file with the following command.

$ ssh -F ~/.ssh/teleport.cfg debian@teleport-0

Ansible Configuration

The following configuration is an example of what could be done and is not required.

export ANSIBLE_SCP_IF_SSH=False

export ANSIBLE_SSH_ARGS="-F ${HOME}/.ssh/teleport.cfg"

export ANSIBLE_INVENTORY_ENABLED=script

export ANSIBLE_HOST_KEY_CHECKING=False

# This example is using the teleport-ansible package

export ANSIBLE_INVENTORY="$(command -v teleport-ansible)"

# You can also point inventory at the downloaded teleport-inventory script

# export ANSIBLE_INVENTORY=teleport-inventory.py

I set up everything as environment variables, which can be saved in "run command" files. The same configuration can also be set in an ansible.cfg file. The world is your configuration oyster, so you do you, boo. The only hard requirement is getting the Teleport SSH configuration into Ansible.
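For anyone who prefers the ansible.cfg route, here is a rough sketch that renders an equivalent file with Python's `configparser`. The section and option names are my mapping of the environment variables above onto their ansible.cfg counterparts; check them against the configuration reference for your Ansible version, since some options (`scp_if_ssh` in particular) may be deprecated in newer releases. `build_ansible_cfg` is a hypothetical helper name.

```python
import configparser
import io
import os


def build_ansible_cfg(ssh_config: str, inventory: str) -> str:
    """Render ansible.cfg text mirroring the environment variables above."""
    # interpolation=None keeps any literal "%" in values untouched.
    cfg = configparser.ConfigParser(interpolation=None)
    cfg["defaults"] = {
        "inventory": inventory,        # ANSIBLE_INVENTORY
        "host_key_checking": "False",  # ANSIBLE_HOST_KEY_CHECKING
    }
    cfg["inventory"] = {
        "enable_plugins": "script",    # ANSIBLE_INVENTORY_ENABLED
    }
    cfg["ssh_connection"] = {
        "ssh_args": f"-F {ssh_config}",  # ANSIBLE_SSH_ARGS
        "scp_if_ssh": "False",           # ANSIBLE_SCP_IF_SSH
    }
    buf = io.StringIO()
    cfg.write(buf)
    return buf.getvalue()


if __name__ == "__main__":
    ssh_config = os.path.expanduser("~/.ssh/teleport.cfg")
    print(build_ansible_cfg(ssh_config, "teleport-inventory.py"))
```

Redirect the printed text into an ansible.cfg in your project directory and Ansible should pick it up without any exported variables.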

Executing Ansible

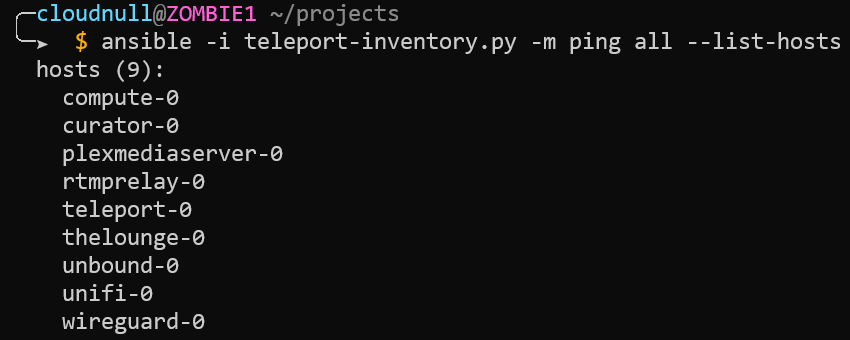

In this example, we list the hosts available to us via the dynamic Teleport inventory. As noted above, the names for our inventory match the names of the nodes found within Teleport. No IP address or domain information is used to provide connectivity. Additionally, I have no direct access to the machines. When I need terminal access to my lab environment, I must go through Teleport.

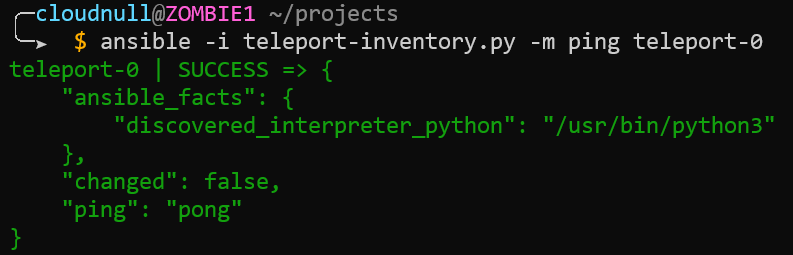

Next, we run a simple ping command to illustrate access to the nodes.

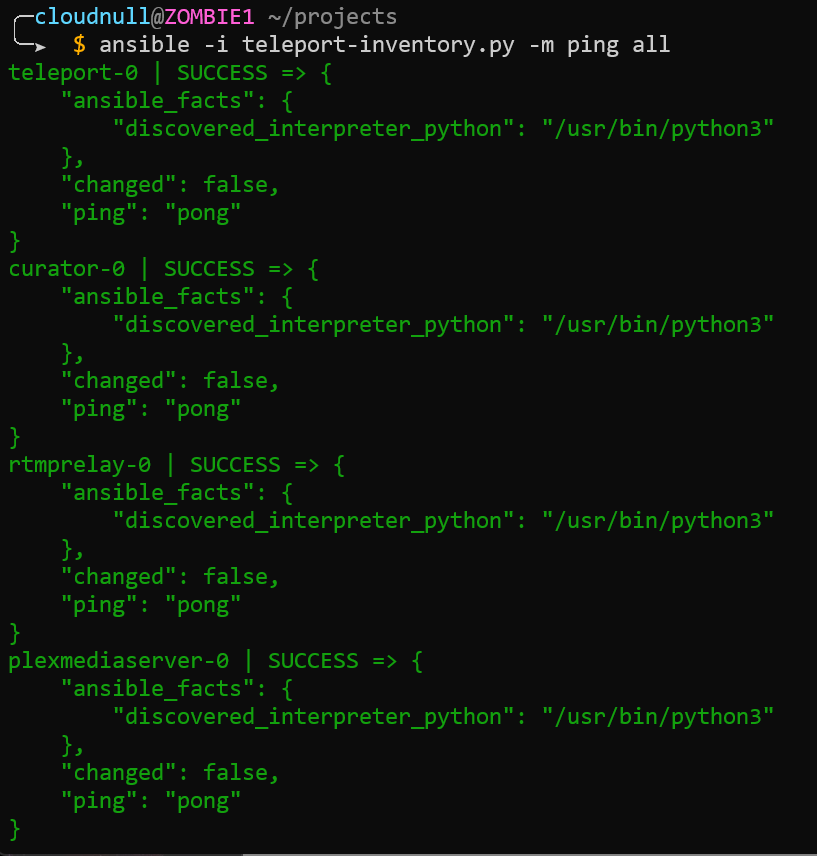

Next, we ping everything to highlight how we can leverage Ansible's parallel execution against our Teleport dynamic inventory.

I illustrate these simple examples because they're just that, simple. There's nothing special the user has to do to make them work. Teleport is serving both as an access and inventory system which allows Ansible to work normally in a fully dynamic environment.

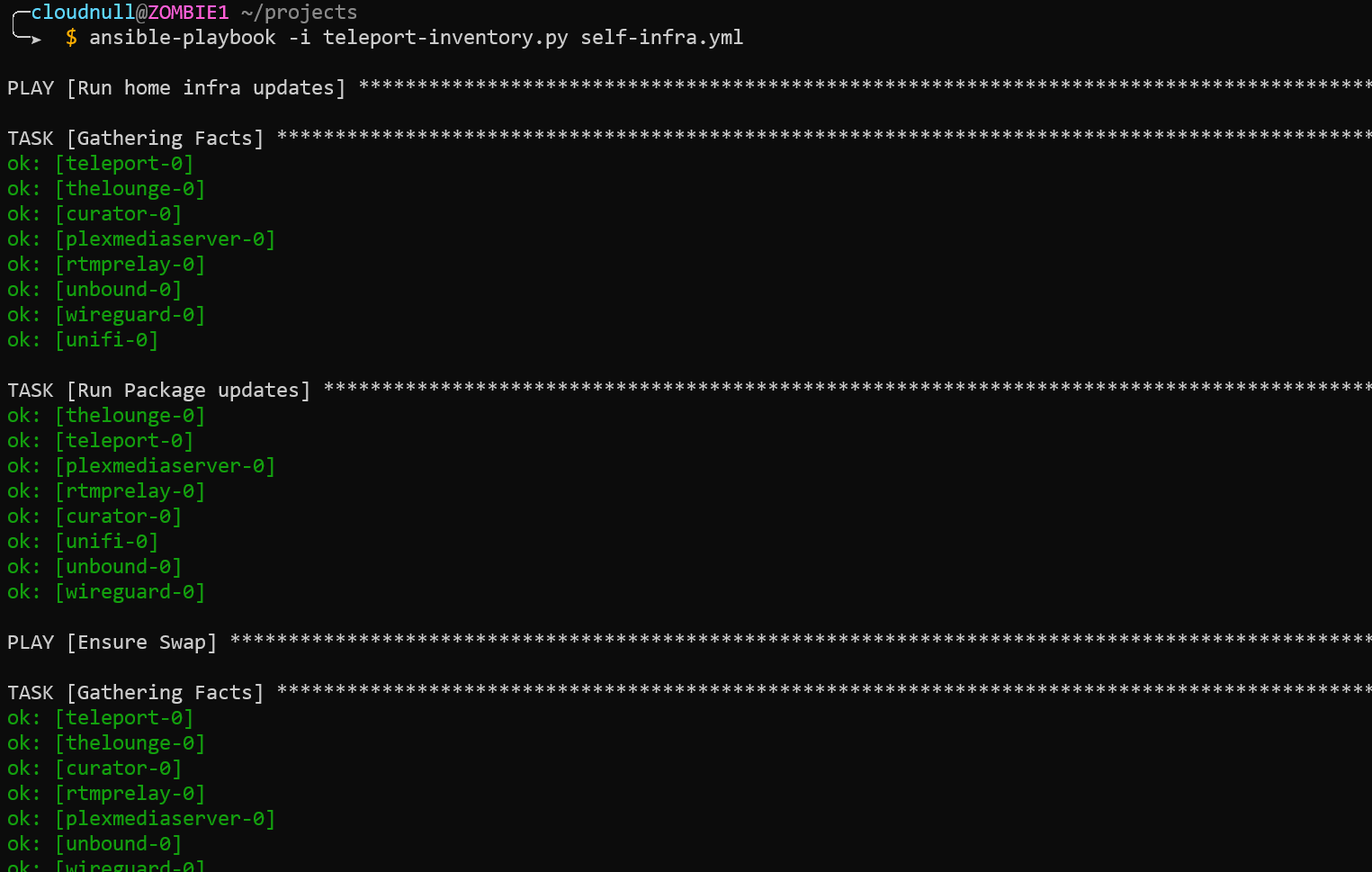

So far, we've just executed simple ad-hoc Ansible commands, but the same pattern can also be applied to running playbooks. Just include the inventory and run whatever you want.

Notes

You've read this far, so you might as well read some more. Here are extra thoughts about the setup, why I like it, and how you can extend it to make it work better for your environment.

The Teleport Dynamic Inventory

The teleport-ansible inventory is very simple, and that's the point: a simple inventory that allows operators to execute complex workflows. There are more lines of documentation in the repository than code. teleport-ansible will inspect the node details and store information found within labels; there's special handling for any label found to start with ansible_. Additionally, labels will become groups, allowing operators to make use of all sorts of Ansible native features.
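To make the label idea concrete, here is a minimal sketch of one way labels could be turned into inventory data. It is not the teleport-ansible implementation: the node record layout (`name`, `labels`), the `<key>_<value>` group naming, and the choice to treat `ansible_` labels as host variables are all assumptions made for illustration.

```python
from collections import defaultdict


def build_inventory(nodes: list[dict]) -> dict:
    """Turn node records into an Ansible script-inventory dict.

    Labels starting with "ansible_" become host variables; every other
    label becomes a "<key>_<value>" group. Both rules are assumptions made
    for this sketch, not a description of teleport-ansible internals.
    """
    hostvars: dict[str, dict] = {}
    groups: dict[str, list[str]] = defaultdict(list)
    for node in nodes:
        name = node["name"]
        hostvars[name] = {}
        for key, value in node.get("labels", {}).items():
            if key.startswith("ansible_"):
                hostvars[name][key] = value
            else:
                groups[f"{key}_{value}"].append(name)
    inventory: dict = {"_meta": {"hostvars": hostvars}}
    for group, hosts in sorted(groups.items()):
        inventory[group] = {"hosts": sorted(hosts)}
    return inventory


if __name__ == "__main__":
    # Hypothetical nodes; the names and labels are invented.
    sample_nodes = [
        {"name": "teleport-0", "labels": {"env": "lab", "ansible_user": "debian"}},
        {"name": "plex-0", "labels": {"env": "lab", "role": "media"}},
    ]
    print(build_inventory(sample_nodes))
```

With groups derived from labels like this, a play could target something like `env_lab` without anyone maintaining a host list by hand.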

The SSH Configuration

The example SSH configuration file is broad and must be tailored to your environment. The broadly scoped configuration file is used to show how easy things can be. It's worth mentioning again that no IP addresses were used or abused in making this post.

Teleport Authentication

When using a service like Teleport, having to authenticate to gain access to something is a fact of life. Some administrators will fight this notion as they're used to having this access via key exchanges or being GOD in their environment. Maybe these people just enjoy the thrill of managing authorized_keys files? While nobody can fix stupid, we can try and make transitions to systems like Teleport as easy as possible.

I use GitHub as my SSO, which I mentioned above. Having an SSO integration means I auth once daily. I've found SSO systems like this generally provide a simpler user experience. No need to type and retype passwords while fumbling for keyfobs every time I need to run commands in my environment. While I like the SSO model, Teleport also supports local authentication for folks who don't want or need such integrations. Local authentication will require more user input to perform a login but once logged in, operators will have the same great experience running commands for the given TTL.

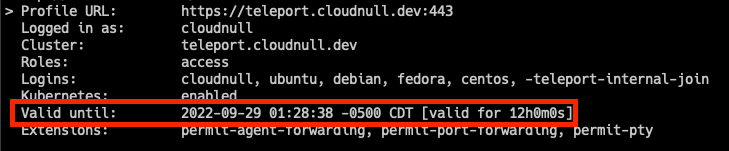

Teleport TTL

The TTL of Teleport is something that operators should be aware of. Ensure the TTL matches runtime expectations before running things that take a long time. The TTL can expire before a given job is completed, like running an Ansible playbook that takes hours to execute.
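A small pre-flight check can catch this before a long run starts. The sketch below only does the arithmetic: it assumes you already have the certificate expiry as a timezone-aware datetime (for example, read off the "Valid until" line that `tsh status` prints) and a rough estimate of how long the job takes. The function name and the five-minute safety margin are hypothetical choices, not anything Teleport provides.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def ttl_covers_run(
    valid_until: datetime,
    expected_runtime: timedelta,
    now: Optional[datetime] = None,
    margin: timedelta = timedelta(minutes=5),
) -> bool:
    """Return True if the certificate outlives the expected run plus a margin."""
    # Default to the current UTC time when no reference time is given.
    now = now or datetime.now(timezone.utc)
    return valid_until - now >= expected_runtime + margin


if __name__ == "__main__":
    # Hypothetical values: a certificate with 2 hours left, a 3 hour playbook.
    expiry = datetime.now(timezone.utc) + timedelta(hours=2)
    if not ttl_covers_run(expiry, timedelta(hours=3)):
        print("Certificate may expire mid-run; log in again before starting.")
```

If the check fails, logging in again before kicking off the playbook is cheaper than finding out three hours in.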

tsh TTL check.

The Good

It works, which is good. If it didn't work, that'd be bad, and I probably wouldn't have written this post.

Feelings

I feel there's a scale limitation when using Teleport and Ansible in large environments; however, I don't have 10000 hosts, so I'm not sure where that limitation is. My feelings are based on past experience running Ansible in large data centers. I personally have struggled to balance forks with Ansible and native SSH, and I imagine the struggle would get real when funneling thousands of connections through a single proxy.

All that said, my Teleport environment is very resource-limited and hasn't exhibited any signs of struggle.

The Teleport node I have runs in a virtual machine sporting only 2GiB of RAM and has been serving roughly 30 nodes in my home lab. I beat the hell out of my environment, treating it like a pair of old work boots, and it has been flawless. While I don't have the ability to fully scale test Teleport and thrash it with Ansible, it seems the good folks behind Teleport have done some diligence in the area of scale testing. I recommend you look at the scaling documentation and build your environment using their resource recommendations.

Wrap up

That's it, that's all I could think to write. Teleport powering Ansible is awesome. If you liked this post or want to chat more about it, or anything else, ping me on Discord or Twitter.

Teleport Driven Ansible Dynamic Inventory as seen through the eyes of #StableDiffusion #Ansible #Teleport https://t.co/vl9eBsFB8v pic.twitter.com/eSP8PBLtkA

— 凯文 Kevin Carter (@cloudnull) September 29, 2022